How to Let Docker Containers Control Other Containers (Without Docker-in-Docker)

Learn how to enable Docker containers to manage other containers by mounting the Docker socket, avoiding the complexity of Docker-in-Docker setups. This tutorial provides a complete working example and discusses security considerations.

Ever needed one container to manage, inspect, or execute commands in other containers? Maybe you’re building an admin dashboard, a CI/CD tool, or a backup system that needs to interact with your database container.

The naive approach is Docker-in-Docker (DinD) - running a full Docker daemon inside a container. But there’s a simpler, more efficient solution: Docker socket mounting.

In this post, I’ll show you exactly how it works with a complete working example you can run on your machine.

The Problem

Imagine you have a web-based admin tool (let’s call it “Toolbox”) that needs to:

- Run database backups: docker exec postgres pg_dump ...

- Check container health: docker inspect postgres

- View logs: docker logs api-server

Your Toolbox runs in its own container. How does it execute these Docker commands?

Two Approaches

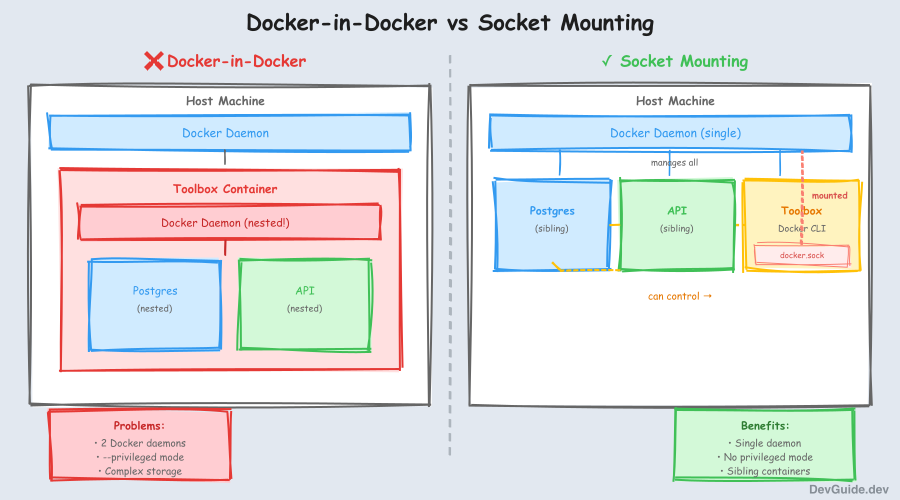

Approach 1: Docker-in-Docker (DinD)

Run a complete Docker daemon inside your container. This creates a nested structure where your Toolbox container runs its own Docker daemon, and any containers it creates live inside that nested environment.

Problems:

- Resource overhead (two Docker daemons)

- Complex storage management

- Security implications of --privileged mode

- Your other containers (Postgres, API) must run inside the nested Docker

Approach 2: Docker Socket Mounting (Recommended)

Share the host’s Docker socket with your container. This lets your Toolbox container talk directly to the host’s Docker daemon, making all containers siblings at the same level.

Benefits:

- Single Docker daemon (no overhead)

- Toolbox controls sibling containers, not nested ones

- Simple volume mount - no privileged mode

- All containers are peers under the same daemon and can share a Docker network

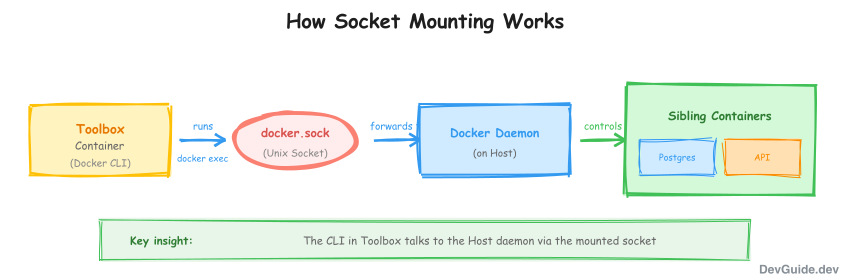

How Docker Socket Mounting Works

The Docker CLI doesn’t contain the Docker engine - it’s just a client that sends commands to the Docker daemon via a Unix socket at /var/run/docker.sock.

When you mount this socket into a container:

    volumes:
      - /var/run/docker.sock:/var/run/docker.sock

The container’s Docker CLI talks to the host’s Docker daemon. Commands like docker ps show the host’s containers, and docker exec postgres ... executes in sibling containers.
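To make the client/daemon split concrete, here is a minimal Python sketch that skips the docker CLI and speaks HTTP to the daemon directly over the socket. It assumes the socket is mounted at the default path and uses the Docker Engine API's GET /containers/json endpoint. The names UnixHTTPConnection, docker_get and list_container_names are made up for this illustration and are not part of any library.

```python
import http.client
import json
import socket

DOCKER_SOCKET = "/var/run/docker.sock"  # assumption: default path, mounted into the container


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that travels over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5.0):
        # "localhost" is only used for the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_get(path, socket_path=DOCKER_SOCKET):
    """Send GET <path> to the daemon and return the decoded JSON body."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        body = response.read()
        if response.status != 200:
            raise RuntimeError(f"Docker API returned {response.status}: {body!r}")
        return json.loads(body)
    finally:
        conn.close()


def list_container_names(socket_path=DOCKER_SOCKET):
    """Roughly what `docker ps` shows: the names of running containers."""
    containers = docker_get("/containers/json", socket_path)
    # The API reports names with a leading slash, e.g. "/worker".
    return [c["Names"][0].lstrip("/") for c in containers]
```

Called from inside a container that has the socket mounted, list_container_names() should return the same names docker ps prints, because both requests end up at the same host daemon.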

Complete Working Example

Let’s build a minimal example: a “controller” container that can execute commands in a “worker” container.

Project Structure

    docker-socket-demo/
    ├── Makefile
    ├── docker-compose.yml
    ├── controller/
    │   ├── Dockerfile
    │   └── app.sh
    └── worker/
        └── Dockerfile

Step 1: Create the Directory Structure

    mkdir -p docker-socket-demo/controller docker-socket-demo/worker
    cd docker-socket-demo

Step 2: Create the Worker Container

This is a simple container that just runs and waits. The controller will execute commands inside it.

worker/Dockerfile:

    FROM alpine:3.19

    # Install some tools the controller might want to use
    RUN apk add --no-cache bash curl

    # Keep container running
    CMD ["tail", "-f", "/dev/null"]

Step 3: Create the Controller Container

This container has Docker CLI installed and will control the worker.

controller/Dockerfile:

    FROM alpine:3.19

    # Install Docker CLI (not the daemon, just the client)
    RUN apk add --no-cache docker-cli bash

    # Copy our demo script
    COPY app.sh /app.sh
    RUN chmod +x /app.sh

    CMD ["/app.sh"]

controller/app.sh:

    #!/bin/bash

    echo "========================================"
    echo " Docker Socket Mounting Demo"
    echo "========================================"
    echo ""

    # Wait for worker to be ready
    sleep 2

    echo "1. Listing all containers (from inside the controller container):"
    echo "----------------------------------------------------------------"
    docker ps --format "table {{.Names}}\t{{.Status}}\t{{.Image}}"
    echo ""

    echo "2. Executing a command in the worker container:"
    echo "------------------------------------------------"
    docker exec worker hostname
    echo ""

    echo "3. Creating a file in the worker container:"
    echo "--------------------------------------------"
    docker exec worker sh -c 'echo "Hello from controller!" > /tmp/message.txt'
    docker exec worker cat /tmp/message.txt
    echo ""

    echo "4. Getting worker container's environment:"
    echo "-------------------------------------------"
    docker exec worker env | head -5
    echo ""

    echo "5. Running a multi-step operation in worker:"
    echo "---------------------------------------------"
    docker exec worker sh -c '
        echo "Step 1: Current directory is $(pwd)"
        echo "Step 2: Creating test data..."
        echo "test data $(date)" > /tmp/test.txt
        echo "Step 3: Verifying..."
        cat /tmp/test.txt
    '
    echo ""

    echo "========================================"
    echo " Demo Complete!"
    echo "========================================"
    echo ""
    echo "Key insight: The controller container executed commands"
    echo "in a SIBLING container (worker), not a nested one."
    echo ""
    echo "This works because /var/run/docker.sock is mounted,"
    echo "allowing the controller to talk to the host's Docker daemon."

    # Keep container running for inspection
    tail -f /dev/null

Step 4: Create docker-compose.yml

docker-compose.yml:

    services:
      # The worker container - a simple container we want to control
      worker:
        build: ./worker
        container_name: worker
        # Set the hostname explicitly; by default it is the container ID
        hostname: worker

      # The controller container - has Docker CLI and can control worker
      controller:
        build: ./controller
        container_name: controller
        depends_on:
          - worker
        volumes:
          # THIS IS THE KEY: Mount the Docker socket
          - /var/run/docker.sock:/var/run/docker.sock

Step 5: Create the Makefile

Makefile:

    .PHONY: build run logs clean demo shell-controller shell-worker help

    # Default target
    help:
    	@echo "Docker Socket Mounting Demo"
    	@echo ""
    	@echo "Usage:"
    	@echo " make demo - Build and run the complete demo"
    	@echo " make build - Build the containers"
    	@echo " make run - Start the containers"
    	@echo " make logs - View controller logs (shows demo output)"
    	@echo " make clean - Stop and remove containers"
    	@echo ""
    	@echo "Interactive:"
    	@echo " make shell-controller - Open shell in controller container"
    	@echo " make shell-worker - Open shell in worker container"

    # Build containers
    build:
    	docker-compose build

    # Run containers in background
    run:
    	docker-compose up -d

    # Show demo output
    logs:
    	@echo "Waiting for demo to complete..."
    	@sleep 3
    	docker-compose logs controller

    # Complete demo: build, run, show output
    demo: clean build run logs
    	@echo ""
    	@echo "-------------------------------------------"
    	@echo "Demo is running. Try these commands:"
    	@echo ""
    	@echo " make shell-controller # Open shell in controller"
    	@echo " make shell-worker # Open shell in worker"
    	@echo " make clean # Stop everything"
    	@echo "-------------------------------------------"

    # Interactive shell in controller (try running docker commands!)
    shell-controller:
    	docker exec -it controller /bin/bash

    # Interactive shell in worker
    shell-worker:
    	docker exec -it worker /bin/bash

    # Clean up
    clean:
    	docker-compose down --remove-orphans 2>/dev/null || true

Step 6: Run the Demo

    make demo

Expected Output:

    ========================================
     Docker Socket Mounting Demo
    ========================================

    1. Listing all containers (from inside the controller container):
    ----------------------------------------------------------------
    NAMES        STATUS         IMAGE
    controller   Up 2 seconds   docker-socket-demo-controller
    worker       Up 3 seconds   docker-socket-demo-worker

    2. Executing a command in the worker container:
    ------------------------------------------------
    worker

    3. Creating a file in the worker container:
    --------------------------------------------
    Hello from controller!

    4. Getting worker container's environment:
    -------------------------------------------
    PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
    HOSTNAME=worker
    HOME=/root

    5. Running a multi-step operation in worker:
    ---------------------------------------------
    Step 1: Current directory is /
    Step 2: Creating test data...
    Step 3: Verifying...
    test data Sat Nov 29 15:45:23 UTC 2025

    ========================================
     Demo Complete!
    ========================================

    Key insight: The controller container executed commands
    in a SIBLING container (worker), not a nested one.

    This works because /var/run/docker.sock is mounted,
    allowing the controller to talk to the host's Docker daemon.

Step 7: Explore Interactively

Open a shell in the controller and try Docker commands:

    make shell-controller

Inside the controller container:

    # List containers - you'll see both controller and worker
    docker ps

    # Execute commands in worker
    docker exec worker ls -la /tmp

    # Inspect the worker
    docker inspect worker | head -20

    # View worker logs
    docker logs worker

Real-World Use Cases

1. Admin Dashboard (Like Our Toolbox)

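    # In your Flask/FastAPI app running in a container
    # (app is your existing Flask or FastAPI application object)
    import subprocess

    @app.post("/backup")
    def create_backup():
        # text=True decodes stdout to str so it can be returned as JSON;
        # check=True raises CalledProcessError if pg_dump fails
        result = subprocess.run([
            "docker", "exec", "postgres",
            "pg_dump", "-U", "postgres", "mydb"
        ], capture_output=True, text=True, check=True)
        return {"backup": result.stdout}

2. CI/CD Runner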

    # GitLab Runner configuration
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock

3. Container Monitoring

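    // Node.js monitoring service
    const { exec } = require('child_process');

    function getContainerStats() {
      exec('docker stats --no-stream --format "{{json .}}"', (err, stdout) => {
        if (err) return console.error('docker stats failed:', err.message);
        // One JSON object per line; drop the trailing empty line before parsing
        const stats = stdout.split('\n').filter(Boolean).map((line) => JSON.parse(line));
        broadcastToClients(stats); // broadcastToClients is defined elsewhere in your service
      });
    }

Security Considerations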

Mounting the Docker socket gives a container significant power over the host. Be aware:

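- Container Escape: A container with Docker socket access can start a new privileged container or bind-mount the host’s filesystem, which is effectively root access on the host.

- Mitigation Strategies:

  - Only mount the socket in trusted containers

  - Mounting the socket read-only (/var/run/docker.sock:/var/run/docker.sock:ro) protects the socket file itself, but it does not restrict which API calls the container can make through it

  - Consider Docker socket proxies like Tecnativa/docker-socket-proxy for fine-grained access control

  - Run the container as a non-root user. That user still needs permission on the socket (typically membership in the group that owns it on the host), and anyone who can use the socket can still control the daemon

- Production Checklist:

      # More secure configuration
      services:
        controller:
          volumes:
            - /var/run/docker.sock:/var/run/docker.sock:ro
          user: '1000:1000'  # Non-root user; must be able to access the socket
          read_only: true  # Read-only filesystem
          security_opt:
            - no-new-privileges:true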
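One more layer that fits the "trusted containers only" advice is to narrow what your own application code is willing to ask the daemon for. The sketch below is a hypothetical allowlist in Python: ALLOWED_CONTAINERS, ALLOWED_PROGRAMS and build_exec_command are illustrative names, and the policy values are placeholders. It only constrains requests that go through this function; it does not stop a compromised process from using the mounted socket directly, which is what a socket proxy is for.

```python
# Hypothetical policy: which containers and programs the controller may touch.
ALLOWED_CONTAINERS = {"worker", "postgres"}
ALLOWED_PROGRAMS = {"pg_dump", "hostname", "cat", "ls"}


def build_exec_command(container, argv):
    """Return a `docker exec` argument list, or raise ValueError if the policy forbids it."""
    if container not in ALLOWED_CONTAINERS:
        raise ValueError(f"container not allowed: {container!r}")
    if not argv or argv[0] not in ALLOWED_PROGRAMS:
        raise ValueError(f"program not allowed: {list(argv[:1])!r}")
    # Returning a list (not a shell string) means no shell on the controller
    # re-interprets the arguments before they reach `docker exec`.
    return ["docker", "exec", container, *argv]
```

A caller would pass the result straight to subprocess.run, for example subprocess.run(build_exec_command("worker", ["hostname"]), capture_output=True, text=True, check=True). Requests such as ["sh", "-c", "..."] are rejected because sh is not on the list.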

Summary

| Approach | Use Case | Complexity | Overhead |
|---|---|---|---|
| Docker Socket Mount | Control sibling containers | Low | None |
| Docker-in-Docker | Isolated Docker environments | High | Significant |
| SSH to Host | Legacy systems | Medium | Network overhead |

Docker socket mounting is the right choice when you need one container to manage others without the complexity of nested Docker environments. It’s used by CI/CD tools, admin dashboards, monitoring systems, and anywhere containers need to orchestrate other containers.

Quick Reference

Minimal docker-compose.yml:

    services:
      controller:
        image: your-controller-image
        volumes:
          - /var/run/docker.sock:/var/run/docker.sock

Required in Dockerfile:

    # Alpine
    RUN apk add --no-cache docker-cli

    # or Debian/Ubuntu
    RUN apt-get update && apt-get install -y docker.io

Test it works:

    docker exec controller docker ps
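If the test fails, the usual causes are a missing CLI or a socket that was never mounted. A small preflight check run inside the controller can tell these apart; the Python sketch below is one way to do it. The function names can_connect and preflight are made up for this example, and the default path is assumed to match the volume mount above.

```python
import shutil
import socket

DEFAULT_SOCKET = "/var/run/docker.sock"  # assumption: same path as the volume mount


def can_connect(socket_path):
    """Return True if something is listening on the Unix socket at socket_path."""
    sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
    try:
        sock.settimeout(2.0)
        sock.connect(socket_path)
        return True
    except OSError:
        # Covers a missing file, a path that is not a socket, and permission errors.
        return False
    finally:
        sock.close()


def preflight(socket_path=DEFAULT_SOCKET):
    """Return a list of human-readable problems; an empty list means ready."""
    problems = []
    if shutil.which("docker") is None:
        problems.append("docker CLI not found on PATH")
    if not can_connect(socket_path):
        problems.append(f"cannot connect to {socket_path}; is the socket mounted?")
    return problems
```

Printing preflight() at container start gives a clearer message than a failed docker ps, and an empty list means both the client and the socket are in place.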